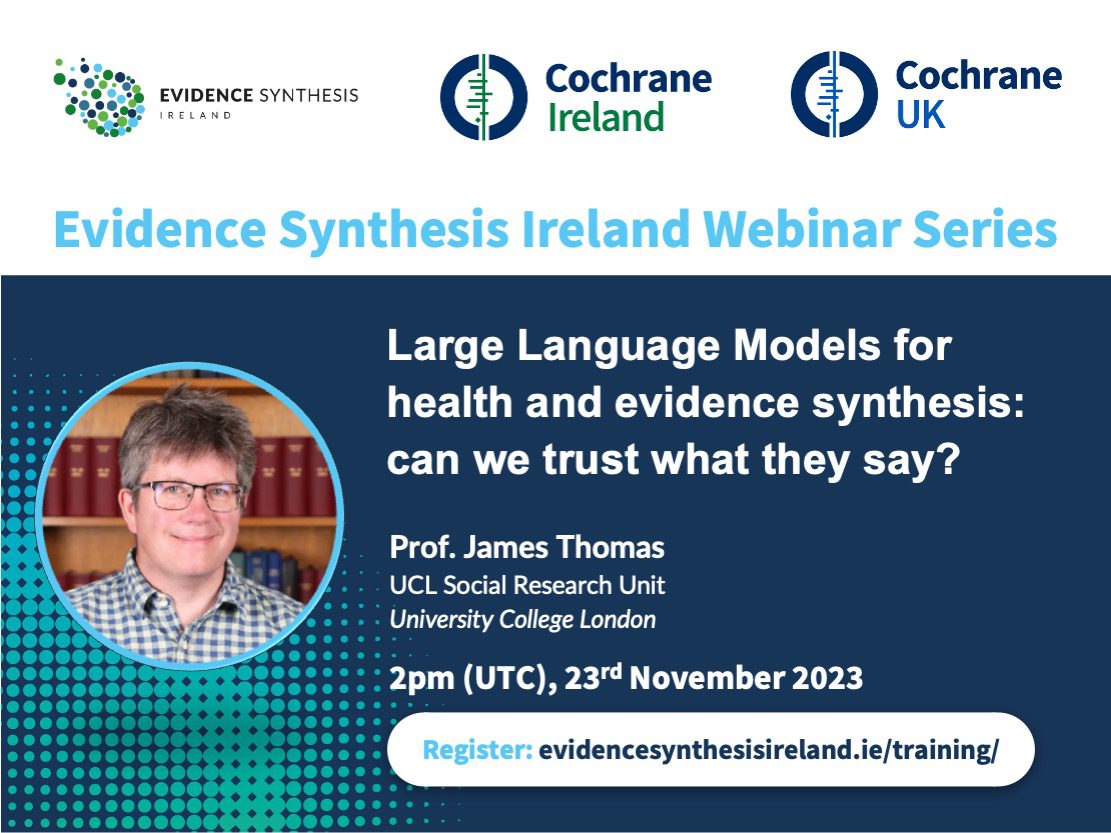

Following the launch of ChatGPT, interest in large language models (LLMs) has grown significantly. Many new tools that take advantage of the human-like text generation capabilities of the latest ‘generative’ language models have been released, and there is widespread expectation that ‘AI’ will soon start to undertake tasks that hitherto could only be undertaken by humans. Supporting healthcare decision-making through real-time evidence synthesis is one such task, and evidence synthesis tools that use LLMs have begun to be deployed. But how do LLMs actually work? And are they reliable enough to be used for real-world decision-making? This webinar outlined how LLMs for healthcare generate the answers that they give. It then considered the use cases that have been advanced for their application, and how well suited LLMs are to these tasks.

Prof. James Thomas is based at the EPPI Centre, and his research is centred on improving policy and decision-making through more creative use and appreciation of existing knowledge. It covers substantive disciplinary fields – such as health promotion, public health and education – and also the development of tools and methods that support this work conducted both within UCL and in the wider community. He has written extensively on research synthesis, including meta-analysis and methods for combining qualitative and quantitative research in ‘mixed method’ reviews. He also designed EPPI-Reviewer, software that manages data through all stages of a systematic review.