In this blog, Xuenan Pang, a PhD student with Evidence Synthesis Ireland at the University of Galway, outlines helpful tips for creating and enhancing a search strategy for evidence synthesis.

A comprehensive search underpins all subsequent stages of evidence synthesis. Designing an effective search strategy can be challenging, and many published reviews include suboptimal searches that risk omitting relevant evidence and introducing bias into the review findings. This blog helps novice researchers develop and refine search strategies. It offers seven practical tips grounded in established methods. These principles enhance search sensitivity (capturing relevant evidence) and specificity (excluding irrelevant results), strengthening the evidence base. Authors include Xuenan Pang, Kavita Kothari, Prof Declan Devane & Dr KM Saif-Ur-Rahman.

The following seven tips include practical considerations and examples.

Tip 1: Start with the most comprehensive database you can thoroughly explore.

The primary database you choose for building your search strategy matters because translation is easier from a more detailed to a less detailed indexing system. Key selection criteria:

- Indexing depth: Detailed vocabularies (e.g., Emtree > MeSH) provide more synonym options and easier translation

- Coverage breadth: Larger databases yield more comprehensive initial results

- Search functionality: Proximity operators and query management interfaces enhance strategy development

- Accessibility & familiarity: You need sustained access and time to familiarize with the interface thoroughly

Embase exemplifies these advantages when it is accessible; for example, research supports its extensive indexing and coverage. However, recent work by Rajit and colleagues highlights emerging alternative sources worth considering, including platforms like OpenAlex and Semantic Scholar.

Regardless of your primary choice, always supplement with searches in CENTRAL, and search both Embase and MEDLINE separately when possible due to indexing differences.

Tip 2: Use proximity operators to capture phrasing variations.

Proximity searching helps retrieve records where related terms appear close together, allowing terms to be matched within a specified word distance rather than as fixed phrases.

For example, a phrase search for “heart disease” may miss variations such as “disease of the heart” or “heart and vascular disease”. A proximity search (e.g., heart adj3 disease* in Ovid MEDLINE or heart NEAR/3 disease* in Embase) retrieves records where the terms appear within three words of each other.

This approach is suited to multi-word phrases that may vary in order or include intervening terms. Unlike the AND operator, which retrieves records even when terms are distant or unrelated in context, proximity searching maintains contextual relevance and improves search precision. Start with a moderate range (e.g., NEAR/3 or NEAR/5) and adjust based on the relevance and volume of results. A wider range improves sensitivity but may reduce specificity.

Note: Proximity syntax varies across databases and interfaces (e.g., NEAR/n, ADJn, N/n). So queries must be adapted when translating between platforms.

Tip 3: Apply the ‘NOT’ operator carefully to exclude irrelevant results.

The Boolean operator ‘NOT’ can improve precision by excluding records containing irrelevant terms or indexing. However, it should be used sparingly and with caution, as it may also remove relevant citations where the excluded terms appear in a different context.

Two scenarios illustrate useful applications of ‘NOT’:

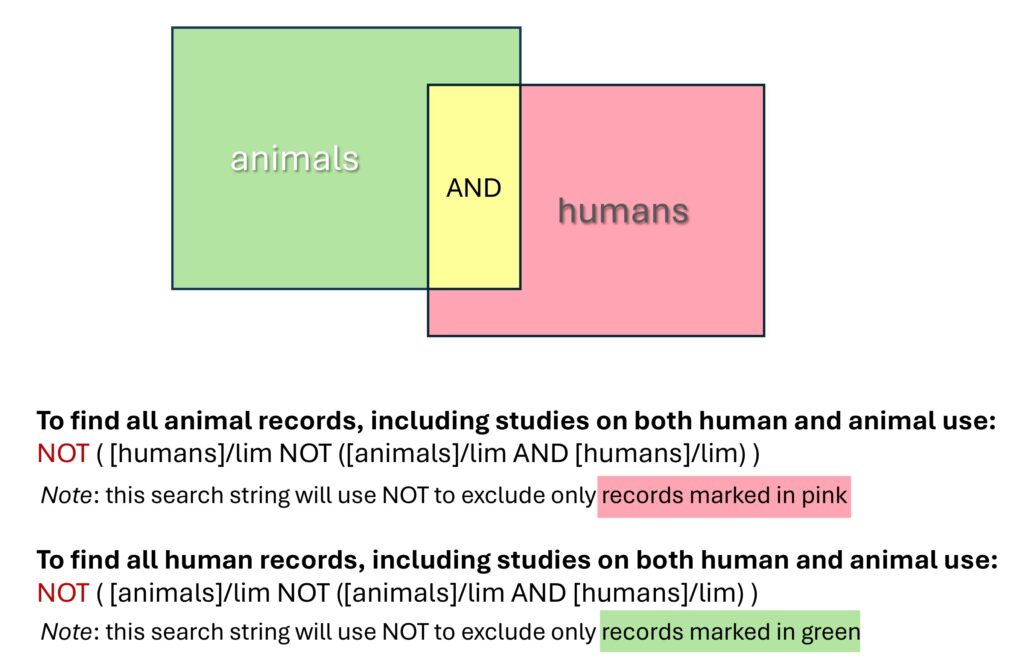

- Excluding animal-only records: For human-only reviews, filters in databases such as Embase and MEDLINE can be used to exclude animal-only records while retaining those indexed as both human and animal. (see Figure 1 for an Embase example)

Figure. 1 Visualisation of species-based NOT logic in Embase, showing how animal-only or human-only records can be excluded while retaining records indexed as both humans and animals.

- Testing candidate additions during search development: The NOT operator can be useful for comparing search strings to assess the number and type of results contributed by specific terms. For example, running “String 2” NOT “String 1” displays results unique to String 2, showing the contribution of additional terms. This helps determine whether to retain or exclude those terms based on the relevance and precision of the added records.

Tip 4: Use seed articles to validate and refine search strategies.

Seed articles (also known as benchmark or sentinel articles) are studies that already meet the inclusion criteria of the review. They are used to validate the search strategy by confirming that known relevant studies are retrieved.

Building your seed set:

- Identify 5–20 articles through preliminary scans, expert recommendations, or reference lists of related reviews

- Ensure diversity across key aspects of your question (e.g., different populations, interventions, outcomes, study designs)

- Include both recent publications and older seminal works

Validation process: Run your draft strategy and check whether seed articles appear in results. Aim to retrieve the vast majority (ideally all) of your seeds. When seeds are missed:

- Review their indexing terms and text words to identify gaps in your vocabulary

- Check if they’re indexed in the database you’re searching (some articles may only appear in certain databases)

- Assess whether the missed article is truly relevant—occasionally, seed article selection may need refinement

Tip 5: Caution with terminology variations.

Variations in terminology related to formatting, semantics, and spelling can significantly impact search results.

- Formatting variations: Hyphens, spaces, and quotation marks can affect results depending on the database. In PubMed, new born[tiab] finds the exact phrase “new born”, whereas new born:ti,ab in Embase retrieves any title/abstract containing “new” and “born”. Global Index Medicus (GIM) behaves similarly to Embase, automatically splitting the phrase into new AND born and further broadening retrieval.

- Semantic ambiguity: Some terms have different meanings across disciplines. For example, “neural network” may refer to machine learning models or biological systems, and “transfer learning” occurs in both artificial intelligence and education research. To reduce ambiguity, broad terms should be combined with context-specific keywords or indexing terms.

- Overly general terms: Generic terms such as ‘data analysis’ are common across disciplines and often reduce precision by retrieving many non-relevant records. More specific alternatives (e.g., ‘meta-analysis’) or combining general terms with context-specific terms to reduce noise. Proximity searching also enhances precision—for example, in Embase: ((‘meta analys*’ OR metaanalys*) NEAR/10 AI):ti,ab,kw

- Spelling and morphological variants: Account for American and British spellings (behavior/behaviour) and different word forms or tenses. Truncation or wildcards (behavio?r; abort* → abortion, abortions, aborting, aborted) retrieve all relevant variants and avoid missing records.

If a database’s handling of such variations is unclear, reviewers should test each form explicitly and compare results to ensure comprehensive retrieval.

Tip 6: Systematically translate search strategies across databases.

Evidence synthesis involves multiple databases, each with distinct syntax and interfaces. It is efficient to first build the search in the most familiar database, then translate it systematically to others. This ensures consistency and saves time compared to developing separate searches independently.

When translating searches, important elements to consider are:

- Field tags (e.g., [tiab] in PubMed vs .ti,ab. in Ovid MEDLINE);

- Proximity operators (e.g., NEAR/X in Embase Elsevier and ADJX in Ovid MEDLINE; some databases do not support proximity searching);

- Controlled vocabularies, such as MeSH and Emtree.

For example:

PubMed: heart-failure[tiab] OR cardiac-failure[tiab]

Ovid MEDLINE: heart-failure.ti,ab. OR cardiac-failure.ti,ab.

Embase: heart-failure:ti,ab OR cardiac-failure:ti,ab

Ensure that each translated search preserves the conceptual structure and Boolean logic of the original strategy. Compare line-by-line result counts as a quality-control check, but do not expect fixed result ratios across databases, because retrieval varies by topic, coverage, indexing, and platform behaviour. Special attention should be given to line structure, parentheses, and features unsupported in the target database. For example, Web of Science does not use controlled vocabulary, so translation should prioritise text-word searching in fields such as Topic rather than subject headings.

Tip 7: Use of large language models (LLMs) cautiously to support search strategy development and peer review.

Recent AI developments, such as general-purpose LLMs like ChatGPT (OpenAI) and Claude (Anthropic), may assist in developing and reviewing search strategies for evidence syntheses. However, they should not be relied upon as definitive sources or replacements for an information specialist. Access also varies by platform, subscription tier, and rate limits, so their practical usefulness may differ across users and institutions.

LLMs may help by proposing synonyms, acronyms, spelling variants, and related concepts; suggesting candidate-controlled vocabulary terms for manual checking against the current thesaurus; and drafting provisional Boolean structures that can then be edited by the reviewer. They may also support a preliminary PRESS (Peer Review of Electronic Search Strategies)-style check by flagging redundancies, missing concept blocks, inconsistent field tags, or possible syntax problems.

Early research indicates that ChatGPT-4 may assist in expanding search terms for systematic reviews, but manual verification is essential due to its limited domain awareness. Both OpenAI and Anthropic also explicitly acknowledge that hallucinations remain a persistent limitation of current LLMs. AI-generated terms should be critically assessed, as LLMs rely on training data patterns and may not align with current indexing standards or specialised terminology. Any use of AI tools—whether in developing or peer-reviewing search strategies—should be explicitly reported to maintain transparency and methodological rigour.

Summary

Developing a high-quality search strategy is an iterative process. This blog outlines seven practical approaches to improve sensitivity and specificity, supporting a robust and unbiased evidence base. Search effectiveness should not be judged solely by the number of results retrieved. Scanning results after each iteration helps assess relevance and detect whether irrelevant citations dominate. This quality check can identify issues that inform further refinement.

Further Reading

We strongly recommend that review authors consult established methodological guidance provided by Cochrane, the PRESS framework, and the Campbell Collaboration, and whenever possible, collaborate with an information specialist or librarian throughout the process.

Acknowledgements The authors thank Kerry Dwan (Centre for Evidence Synthesis in Global Health, Department of Clinical Sciences, Liverpool School of Tropical Medicine, Liverpool, UK) and Rachel Richardson (Methods Support Unit, Evidence Production and Methods Directorate, Cochrane, London, UK) for their valuable assistance in developing the content.

Declaration of generative AI and AI-assisted technologies in the writing process The initial draft of this blog was based on the authors’ prior experiences in developing and refining systematic search strategies, with key insights summarised by XP. ChatGPT (GPT-4.5) was used to support the authors’ drafting of portions of the narrative text and assisted with identifying relevant synonyms and terminology and reviewing the logical coherence of search strategies. All authors critically reviewed and extensively revised the manuscript through multiple iterations, ensuring that the content remained accurate, truthful, and aligned with the intended scope and objectives.